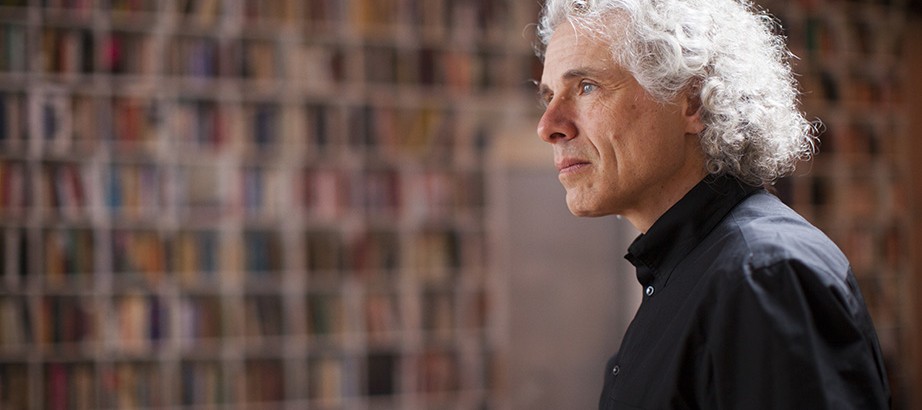

Steven Pinker’s history of thought

Steven Pinker follows Sara Lawrence-Lightfoot, Martha Minow, and E.O. Wilson in the Experience series, interviews with Harvard faculty members covering the reasons they became teachers and scholars, and the personal journeys, missteps included, behind their professional success. Interviews with Melissa Franklin, Stephen Greenblatt, Laurel Thatcher Ulrich, Helen Vendler, and Walter Willett will appear in coming weeks.

The brain is Steven Pinker’s playground. A cognitive scientist and experimental psychologist, Pinker is fascinated by language, behavior, and the development of human nature. His work has ranged from a detailed analysis of how the mind works to a best-seller about the decline in violence from biblical times to today.

Raised in Montreal, Pinker was drawn early to the mysteries of thought that would drive his career, and shaped in part by coming of age in the ’60s and early ’70s, when “society was up for grabs,” it seemed, and nature vs. nurture debates were becoming more complex and more heated.

His earliest work involved research in both visual imagery and language, but eventually he devoted himself to the study of language development, particularly in children. His groundbreaking 1994 book “The Language Instinct” put him firmly in the sphere of evolutionary psychology, the study of human impulses as genetically programmed and language as an instinct “wired into our brains by evolution.” Pinker, 59, has spent most of his career in Cambridge, and much of that time at Harvard — first for his graduate studies, later as an assistant professor. He is the Johnstone Family Professor of Psychology.

Q: Can you tell me about your early life? Where did you grow up and what did your parents do?

A: I grew up in Montreal, as part of the Jewish minority within the English-speaking minority within the French-speaking minority in Canada. This is the community that gave the world Leonard Cohen, who my mother knew, and Mordecai Richler, who my father knew, together with William Shatner, Saul Bellow, and Burt Bacharach. I was born in 1954, the peak year of the baby boom. My grandparents came to Canada from Eastern Europe in the 1920s, I surmise, because in 1924 the United States passed a restrictive immigration law. I can visualize them looking at a map and saying “Damn, what’s the closest that we can get to New York? Oh, there’s this cold place called Canada, let’s try that.” Three were from Poland, one from what is now Moldova. My parents both earned college degrees. My father had a law degree, but for much of his career did not practice law. He worked as a sales representative and a landlord and owned an apartment-motel in Florida. But he reopened his law practice in his 50s, and retired at 75. Like many women of her generation, my mother was a homemaker through the ’50s and ’60s. In the 1970s she got a master’s degree in counseling, then got a job and later became vice principal of a high school in Montreal.

I went to public schools in the suburbs of Montreal, and then to McGill University, which is also where my parents went. I came to Harvard in 1976 for graduate school, got my Ph.D. from this [psychology] department in 1979, went to MIT to do a postdoc, and came back here as an assistant professor in 1980. It was what they called a folding chair, since in those years Harvard did not have a genuine tenure track. I was advised to take the first real tenure-track job that came my way, and that happened within a few months, so I decamped for Stanford after just one year here. Something in me wanted to come back to Boston, so I left Stanford after a year and I was at MIT for 21 years before returning to Harvard ten and a half years ago. This is my third stint at Harvard.

Q: Were your parents instrumental in your choice of a career?

A: Not directly, other than encouraging my intellectual growth and expecting that I would do something that would make use of my strengths.

Q: What were those strengths?

A: My parents wanted me to become a psychiatrist, given my interest in the human mind, and given the assumption that any smart, responsible young person would go into medicine. They figured it was the obvious career for me. The 1970s was a decade in which the academic job market had collapsed. There were stories in The New York Times of Ph.D.s driving taxis and working in sheriff’s offices, and so they thought that a Ph.D. would be a ticket to unemployment — some things don’t change. They tried to reason with me: “If you become a psychiatrist, you get to indulge your interest in the human mind, but you also always have a job. You can always treat patients.” But I had no interest in pursuing a medical degree, nor in treating patients. Psychopathology was not my primary interest within psychology. So I gambled, figuring that if the worst happened and I couldn’t get an academic job I would be 25 years old and could do something else. Also, I chose a field — cognitive psychology — that I knew was expanding. I expected that psychology departments would be converting slots in the experimental analysis of behavior, that is, rats and pigeons being conditioned, to cognitive psychology. And that’s exactly what happened. Fortunately, I got three job offers in three years at three decent places. My parents were relieved, not to mention filled with naches.

Q: I read that an early experience with anarchy got you intrigued about the workings of the mind. Can you tell me more about that?

A: I was too young for ’60s campus activism; I was in high school when all of the excitement happened. But it was very much the world I lived in. The older siblings of my friends were college students, and you couldn’t avoid the controversies of the ’60s if you read the newspaper and watched TV. In the ’60s everyone had to have a political ideology. You couldn’t get a date unless you were a Marxist or an anarchist. Anarchism seemed appealing. I had a friend who had read Kropotkin and Bakunin and he persuaded me that human beings are naturally generous and cooperative and peaceful. That’s just the rational way to be if you didn’t have a state forcing you to delineate your property and separate it from someone else’s. No state, no property, nothing to fight over . . . I’d have arguments over the dinner table with my parents, and they said that if the police ever disappeared, all hell would break loose. Being 14 years old, of course I knew better, until an empirical test presented itself.

Quebec is politically and economically very Gallic: Sooner or later, every public sector goes on strike. One week it’s the garbage collectors, another week the letter carriers. Then one day the police went on strike. They simply did not show up for work one morning. So what happened? Well, within a couple of hours there was widespread looting, rioting, and arson — not one but two people were shot to death, until the government called in the Mounties to restore order. This was particularly shocking in Montreal, which had a far lower rate of violent crime than American cities. Canadians felt morally superior to Americans because we didn’t have the riots and the civil unrest of the 1960s. So to see how quickly violent anarchy could break out in the absence of police enforcement was certainly, well, informative. As so often happens, long-suffering mom and dad were right, and their smart-ass teenage son was wrong. That episode also gave me a taste of what it’s like to be a scientist, namely that cherished beliefs can be cruelly falsified by empirical tests.

I wouldn’t say it’s that incident in particular that gave me an interest in human nature. But I do credit growing up in the ’60s, when these ideas trickled down, and the early ’70s, which were an extension of the ’60s. Debates on human nature and its political implications were in the air. Society was up for grabs. There was talk of revolution and rationally reconstructing society, and those discussions naturally boiled down to rival conceptions of human nature. Is the human psyche socially constructed by culture and parenting, or is there even such a thing as human nature? And if there is, what materials do we have to work with in organizing a society? In college I took a number of courses that looked at human nature from different vantage points: anthropology, sociology, psychology, literature, philosophy. But psychology appealed to me because it seemed to ask profound questions about our kind, but it also offered the hope that the questions could be answered in the lab. So it had just the right mixture of depth and tractability.

Q: You started your career interested in the visual realm as well as in language, but eventually you chose to focus your energies on your work with language. Why?

A: Starting from graduate school I pursued both. My Ph.D. thesis was done under the supervision of Stephen Kosslyn, who later became chair of this department, then dean of social science until he left a couple of years ago to become provost of Minerva University. My thesis was on visual imagery, the ability to visualize objects in the mind’s eye. At the same time, I took a course with Roger Brown, the beloved social psychologist who was in this department for many years. In yet another course I wrote a theoretical paper on language acquisition, which took on the question “How could any intelligent agent make the leap from a bunch of words and sentences in its input to the ability to understand and produce an infinite number of sentences in the language from which they were drawn?” That was the problem that Noam Chomsky set out as the core issue in linguistics.

So I came out of graduate school with an interest in both vision and language. When I was hired back at Harvard a year after leaving, I was given responsibility for three courses in language acquisition. In the course of developing the lectures and lab assignments I started my own empirical research program on language acquisition. And I pursued both projects for about 10 years until the world told me that it found my work on language more interesting than my work on visual cognition. I got more speaking invitations, more grants, more commentary. And seeing that other people in visual cognition like Ken Nakayama, my colleague here, were doing dazzling work that I couldn’t match, whereas my work on language seemed to be more distinctive within its field — that is, there weren’t other people doing what I was doing — I decided to concentrate more and more on language, and eventually closed down my lab in visual cognition.

Q: Did you have any doubts when you were starting out in your career?

A: Oh, absolutely. I was terrified of ending up unemployed. When I got to Harvard, the Psychology Department, at least the experimental program in the Psychology Department, was extremely mathematical. It specialized in a sub-sub-discipline called psychophysics, which was the oldest part of psychology, coming out of Germany in the late 19th century. William James, the namesake of this building, said “the study of psychophysics proves that it is impossible to bore a German.” Now, I’m interested in pretty much every part of psychology, including psychophysics. But this was simply not the most exciting frontier in psychology, and even though I was good in math, I didn’t have nearly as much math background as a hardcore psychophysicist, and I wondered whether I had what it took to do the kind of psychology being done here. But it was starting to become clear — even at Harvard — that mathematical psychophysics was becoming increasingly marginalized, and if it wanted to keep up, Harvard had to start hiring in cognitive psychology. They hired Steve Kosslyn, we immediately hit it off, and I felt much more at home.

Q: If you were trying to get someone interested in this field today, what would you say?

A: What could be more interesting than how the mind works? Also, I believe that psychology sits at the center of intellectual life. In one direction, it looks to the biological sciences, to neuroscience, to genetics, to evolution. But in the other, it looks to the social sciences and the humanities. Societies are formed and take their shape from our social instincts, our ability to communicate and cooperate. And the humanities are the study of the products of our human mind, of our works of literature and music and art. So psychology is relevant to pretty much every subject taught at a university.

Psychology is blossoming today, but for much of its history it was dull, dull, dull. Perception was basically psychophysics, the study of the relationship between the physical magnitude of stimulus and of its perceived magnitude — that is, as you make a light brighter and brighter, does its subjective brightness increase at the same rate or not? It also studied illusions, like the ones on the back of the cereal box, but without much in the way of theory. Learning was the study of the rate at which rats press levers when they are rewarded with food pellets. Social psychology was a bunch of laboratory demonstrations showing that people could behave foolishly and be mindless conformists, but also without a trace of theory explaining why. It’s only recently, in dialogue with other disciplines, that psychology has begun to answer the “why” questions. Cognitive science, for example, which connects psychology to linguistics, theoretical computer science, and philosophy of mind, has helped explain intelligence in terms of information, computation, and feedback. Evolutionary thinking is necessary to ask the “why” questions: “Why does the mind work the way it does instead of some other way in which it could have worked?” This crosstalk has made psychology more intellectually satisfying. It’s no longer just one damn phenomenon after another.

Q: Is there a single work that you are most proud of?

A: I am proud of “How the Mind Works” for its sheer audacity in trying to explain exactly that, how the mind works, between one pair of covers. At the other extreme of generality, I’m proud of a research program I did for about 15 years that culminated in “Words and Rules,” a book about, of all things, irregular verbs, which I use as a window onto the workings of cognition. I’m also fulfilled by having written my most recent book, “The Better Angels of Our Nature,” which is about something completely different: the historical decline of violence and its causes, a phenomenon that most people are not even aware of, let alone have an explanation for. In that book, I first had to convince readers that violence has declined, knowing that the very idea strikes people as preposterous, even outrageous. So I told the story in 100 graphs, each showing a different category of violence: tribal warfare, slavery, homicide, war, civil war, domestic violence, corporal punishment, rape, terrorism. All have been in decline. Having made this case, I returned to being a psychologist, and set myself the task of explaining how that could have happened. And that explanation requires answering two psychological questions: “Why was there so much violence in the past?” and “What drove the violence down?” For me, the pair of phenomena stood as a corroboration of an idea I have long believed, namely that human nature is complex. There is no single formula that explains what makes people tick, no wonder tissue, no magical all-purpose learning algorithm. The mind is a system of mental organs, if you will, and some of its components can lead us to violence, while others can inhibit us from violence. What changed over the centuries and decades is which parts of human nature are most engaged. I took the title, “The Better Angels of Our Nature,” from Abraham Lincoln’s first inaugural. It’s a poetic allusion to the idea that there are many components to human nature, some of which can lead to cooperation and amity.

Q: I read a newspaper article in which you talked about the worst thing you have ever done. Can you tell me about that?

A: It was as an undergraduate working in a behaviorist lab. I carried out a procedure that turned out to be tantamount to torturing a rat to death. I was asked to do it, and against my better judgment, did it. I knew it had little scientific purpose. It was done in an era in which there was no oversight over the treatment of animals in research, and just a few years later it would have been inconceivable. But this painful episode resonated with me for two reasons. One is that it was a historical change in a particular kind of violence that I lived through, namely the increased concern for the welfare of laboratory animals. This was one of the many developments I talk about in “The Better Angels of Our Nature.” Also, as any psychology student knows, humans sometimes do things against their own conscience under the direction of a responsible authority, even if the authority has no power to enforce the command. This is the famous Milgram experiment, in which people were delivering what they thought were fatal shocks to subjects pretending to be volunteers. I show the film of the Milgram experiment to my class every year. It’s harrowing to watch, but I’ve seen it now 17 times and found it just as gripping the 17th time as the first. There was a lot of skepticism that people could possibly behave that way. Prior to the experiment, a number of experts were polled for their prediction as to what percentage of subjects would administer the most severe shock. The average of the predictions was on the order of one-tenth of one percent. The actual result was 70 percent. Many people think there must be some trick or artifact, but having behaved like Milgram’s 70 percent myself, despite thinking of myself as conscientious and morally concerned, I believe that the Milgram study reveals a profound and disturbing feature of human psychology.

Pinker, at his Boston home, might someday add photography to his list of book topics.

Q: What would you say is your biggest flaw as a scholar? What about your greatest strength?

A: That’s for other people to judge! I am enough of a psychologist to know that any answer I give would be self-serving. La Rochefoucauld said, “Our enemies’ opinions of us come closer to the truth than our own.”

Q: As an expert in language, what do you think of Twitter?

A: I was pressured into becoming a Twitterer when I wrote an op-ed for The New York Times saying that Google is not making us stupid, that electronic media are not ruining the language. And my literary agent said, “OK, you’ve gone on record saying that these are not bad things. You better start tweeting yourself.” And so I set up a Twitter feed, which turns out to suit me because it doesn’t require taking out hours of the day to write a blog. The majority of my tweets are links to interesting articles, which takes advantage of the breadth of articles that come my way — everything from controversies over correct grammar to trends in genocide.

Having once been a young person myself, I remember the vilification that was hurled at us baby boomers by the older generation. This reminds me that it is a failing of human nature to detest anything that young people do just because older people are not used to it or have trouble learning it. So I am wary of the “young people suck” school of social criticism. I have no patience for the idea that because texting and tweeting force one to be brief, we’re going to lose the ability to express ourselves in full sentences and paragraphs. This simply misunderstands the way that human language works. All of us command a variety of registers and speech styles, which we narrowcast to different forums. We speak differently to our loved ones than we do when we are lecturing, and still differently when we are approaching a stranger. And so, too, we have a style that is appropriate for texting and instant messaging that does not necessarily infect the way we communicate in other forums. In the heyday of telegraphy, when people paid by the word, they left out the prepositions and articles. It didn’t mean that the English language lost its prepositions and articles; it just meant that people used them in some media and not in others. And likewise, the prevalence of texting and tweeting does not mean that people magically lose the ability to communicate in every other conceivable way.

Q: Early in your career you wrote a number of important technical works. Do you find it more fun to write the broader appealing books?

A: Both are appealing for different reasons. In trade books I have the length to pursue objections, digressions, and subtleties, something that is hard to do in the confines of a journal article. I also like the freedom to avoid academese and to write in an accessible style — which happens to be the very topic of my forthcoming book, “The Sense of Style: The Thinking Person’s Guide to Writing in the 21st Century.” I also like bringing to bear ideas and sources of evidence that don’t come from a single discipline. In the case of my books on language, for example, I used not just laboratory studies of kids learning to talk, or studies of language in patients with brain damage, but also cartoons and jokes where the humor depends on some linguistic subtlety. Telling examples of linguistic phenomena can be found in both high and low culture: song lyrics, punch lines from stand-up comedy, couplets from Shakespeare. In “Better Angels,” I supplemented the main narrative, told with graphs and data, with vignettes of culture at various times in history, which I presented as a sanity check, as a way of answering the question, “Could your numbers be misleading you into a preposterous conclusion because you didn’t try to get some echo from the world as to whether life as it was lived reflects the story told by the numbers?” If, as I claim, genocide is not a modern phenomenon, we should see signs of it being treated as commonplace or acceptable in popular narratives. One example is the Old Testament, which narrates one genocide after another, commanded by God. This doesn’t mean that those genocides actually took place; probably most of them did not. But it shows the attitude at the time, which is, genocide is an excellent thing as long as it doesn’t happen to you.

I also find that there is little distinction between popular writing and cross-disciplinary writing. Academia has become so hyperspecialized that as soon as you write for scholars who are not in your immediate field, the material is as alien to them as it is to a lawyer or a doctor or a high school teacher or a reader of The New York Times.

Q: Were you a big reader as a teen? Can you think of one or two works you read early, fiction or nonfiction, where you came away impressed, even inspired, by the ideas, the craft, or both?

A: I was a voracious reader, and then as now, struggled to balance breadth and depth, so my diet was eclectic: newspapers, encyclopedias, a Time-Life book-of-the-month collection on science, magazines (including Esquire in its quality-essay days and Commentary in its pre-neocon era), and teen-friendly fiction by Orwell, Vonnegut, Roth, and Salinger (the intriguing Glasses, not the tedious Caulfield). Only as a 17-year-old in junior college did I encounter a literary style I consciously wanted to emulate — the wit and clarity of British analytical philosophers like Gilbert Ryle and A.J. Ayer, and the elegant prose of the Harvard psycholinguists George Miller and Roger Brown.

Q: Might we one day see a Steven Pinker book about horse racing or piano playing — or a Pinker novel? Is there a genre or off-work-hours interest you’ve thought seriously about putting book-length work into?

A: Whatever thoughts I might have had of writing a novel were squelched by marrying a real novelist [Rebecca Goldstein] and seeing firsthand the degree of artistry and brainpower that goes into literary fiction. But I have pondered other crossover projects. I’m an avid photographer, and would love to write a book someday that applied my practical experience, combined with vision science and evolutionary aesthetics, to explaining why we enjoy photographs. And I’ve thought of collaborating with Rebecca on a book on the psychology, philosophy, and linguistics of fiction — which would give me an excuse to read the great novels I’ve never found time for.

Q: You have won several teaching awards during your career. What makes a great teacher?

A: Foremost is passion for the subject matter. Studies of teaching effectiveness all show that enthusiasm is a major contributor. Also important is an ability to overcome professional narcissism, namely a focus on the methods, buzzwords, and cliques of your academic specialty, rather than a focus on the subject matter, the actual content. I don’t think of what I’m teaching my students as “psychology.” I think of it as teaching them “how the mind works.” They’re not the same thing. Psychology is an academic guild, and I could certainly spend a lot of time talking about schools of psychology, the history of psychology, methods in psychology, theories in psychology, and so on. But that would be about my clique, how my buddies and I spend our days, how I earn my paycheck, what peer group I want to impress. What students are interested in is not an academic field but a set of phenomena in the world — in this case the workings of the human mind. Sometimes academics seem not to appreciate the difference.

A third ingredient of good teaching is overcoming “the curse of knowledge”: the inability to know what it’s like not to know something that you do know. That is a lifelong challenge. It’s a challenge in writing, and it’s a challenge in teaching, which is why I see a lot of synergy between the two. Often an idea in one of my books will have originated from the classroom, or vice versa, because the audience is the same: smart people who are intellectually curious enough to have bought the book or signed up for the course but who are just not as knowledgeable about a particular topic as I am. The obvious solution is to “imagine the reader over your shoulder” or “to put yourself in your students’ shoes.” That’s a good start, but it’s not enough, because the curse of knowledge prevents us from fully appreciating what it’s like to be a student or a reader. That’s why writers need editors: The editors force them to realize that what’s obvious to them isn’t obvious to everyone else. And it’s why teachers need feedback, either from seeing the version of your content that comes back at you in exams, or in conversations with students during office hours, or in discussion sessions. Another important solution is being prepared to revise. Most of the work of writing is in the revising. During the first pass of the writing process, it’s hard enough to come up with ideas that are worth sharing. To simultaneously concentrate on the form, on the felicity of expression, is too much for our thimble-sized minds to handle. You have to break it into two distinct stages: Come up with the ideas, and polish the prose. This may sound banal, but I find that it comes as a revelation to people who ask about my writing process. It’s why in my SLS 20 class, the assignment for the second term paper is to revise the first term paper. That’s my way to impress on students that the quality comes in the revision.

Q: How do students differ today from when you were a student?

A: What a dangerous question! The most tempting and common answer is the thoughtless one: “The kids today are worse.” It’s tempting because people often confuse changes in themselves with changes in the times, and changes in the times with moral and intellectual decline. This is a well-documented psychological phenomenon. Every generation thinks that the younger generation is dissolute, lazy, ignorant, and illiterate. There is a paper trail of professors complaining about the declining quality of their students that goes back at least 100 years. All this means that your question is one that people should think twice before answering. I know a lot more now than I did when I was a student, and thanks to the curse of knowledge, I may not realize that I have acquired most of it during the decades that have elapsed since I was a student. So it’s tempting to look at students and think, “What a bunch of inarticulate ignoramuses! It was better when I was at that age, a time when I and other teenagers spoke in fluent paragraphs, and we effortlessly held forth on the foundations of Western civilization.” Yeah, right.

Here is a famous experiment. A 3-year-old comes into the lab. You give him a box of M&Ms. He opens up the box and instead of finding candy he finds a tangle of ribbons. He is surprised, and now you say to him, “OK, now your friend Jason is going to come into the room. What will Jason think is in the box?” The child says, “ribbons,” even though Jason could have no way of knowing that. And, if you ask the child, “Before you opened the box, what did you think was in it?” They say, “ribbons.” That is, they backdate their own knowledge. Now we laugh at the 3-year-old, but we do the same thing. We backdate our own knowledge and sophistication, so we always think that the kids today are more slovenly than we were at that age.

Q: What are some of the greatest things your students have taught you?

A: Many things. The most obvious is the changes in technology for which we adults are late adopters. I had never heard of Reddit, let alone knowing that it was a major social phenomenon, until two of my students asked if I would do a Reddit AMA [Ask Me Anything]. I did the session in my office with two of my students guiding me, kind of the way I taught my grandmother how to use this newfangled thing called an answering machine. That evening I got an email from my editor in New York saying: “The sales of your book just mysteriously spiked. Any explanation?” It was all thanks to Reddit, which I barely knew existed. Another is a kind of innocence — though that’s a condescending way to put it. It’s a curiosity about the world untainted by familiarity with an academic field. It works as an effective challenge to my own curse of knowledge. So if you want to know what it’s like not to know something that you know, the answer is not to try harder, because that doesn’t work very well. The answer is to interact with someone who doesn’t know what you know, but who is intelligent, curious, and open.

Q: If you weren’t in this field, what would you be doing?

A: Am I allowed to be an academic?

Q: You can be anything you want.

A: I could have been in some other field that deals with ideas, like philosophy or constitutional law. I have enough of an inner geek to imagine being a programmer, and for a time as an undergraduate that appealed to me. But as much as I like gadgets and code, I like ideas more, so I suspect that the identical twin separated from me at birth would also have done something in the world of ideas.

Q: No Steven Pinker interview would be complete without a question about your hair. I recently saw a picture of you from the 1970s, and your style appears unchanged. Why haven’t you gone for a shorter look?

A: First, there’s immaturity. Any boy growing up in the ’60s fought a constant battle with his father about getting a haircut. Now no one can force me to get my hair cut, and I’m still reveling in the freedom. Also, I had a colleague at MIT, the computer scientist Pat Winston, who had a famous annual speech on how to lecture, and one of his tips was that every professor should have an affectation, something to amuse students with. Or journalists, comedians, and wise guys. I am the charter member of an organization called The Luxuriant Flowing Hair Club for Scientists. The MIT newspaper once ran a feature on all the famous big-haired people I had been compared to, including Simon Rattle, Robert Plant, Spinoza, and Bruno, the guy who played the piano on the TV show “Fame.” When I was on The Colbert Report, talking about fear and security, he pulled out an electromagnetic wand and scanned my hair for concealed weapons. So it does have its purposes.

Interview was edited for length and clarity.